AI-generated summary

Physical Artificial Intelligence (AI), also known as Embodied AI, represents a transformative shift in how machines interact with the real world by integrating advanced learning with sensory capabilities such as visual, tactile, and acoustic perception. Unlike traditional digital AI, these systems can interpret and respond to their environments with unprecedented accuracy, enabling more intuitive and adaptive interactions. This technology is significantly impacting diverse sectors including robotics, autonomous mobility, finance, and healthcare. Collaborative robots are enhancing industrial and logistics operations by working safely alongside humans, while autonomous vehicles equipped with multimodal sensors improve decision-making and reliability. In finance, AI-driven virtual analysts optimize risk and investment strategies, and in healthcare, robots like ElliQ support elderly care, improving emotional well-being and autonomy. Advances in quantum sensing and large-scale quantitative models further enable breakthroughs in diagnostics and pharmaceutical research.

Despite these promising developments, Physical AI faces notable challenges. Technically, it struggles with limited contextual reasoning and lacks realistic simulators for complex environments, hindering its adaptability. Ethically, issues of trust, transparency, privacy, and regulation are paramount to ensure societal acceptance. Researchers emphasize the need for balanced oversight to foster innovation while safeguarding users. As investments surge and the U.S. and China lead this competitive field, overcoming these hurdles will be critical. Ultimately, Physical AI is poised to redefine human-machine relationships, unlocking new markets and solutions by seamlessly blending perception, cognition, and action in machines.

The combination of visual, tactile and acoustic data is revolutionizing the perceptual capacity of artificial intelligence systems.

On the border between the tangible and the digital, Physical Artificial Intelligence is weaving a new paradigm in which machines not only process information, but also sense it, interpret it and convert it into action. This form of AI, also known as Embodied AI, marks a turning point in the way machines interact with the real world: unlike purely digital systems, it combines advanced learning with visual, tactile and acoustic sensors that allow it to interpret its environment with unprecedented accuracy.

During the latest Future Trends Forum of the Bankinter Foundation, international experts explored the impact of these innovations in areas such as advanced robotics, autonomous mobility, health and finance, making it clear that physical AI is redefining the relationship between humans and technology. With advances in specialized hardware and more sophisticated generative models, investment in this field has reached record levels, consolidating the United States and China as the main players in a race that will shape economic and technological competitiveness in the next decade.

One of the great advances of physical AI is its ability to perceive context and adapt autonomously: equipped with multimodal sensors, these systems can see, hear and even feel their environment, allowing for more intuitive and fluid interactions. For neuroscientist Antonio Damasio, this type of intelligence, although still far from human intelligence, represents a first step towards an AI more integrated with emotions and perception. In his view, human consciousness arises from the interaction between feelings and reflective processes and, although current systems do not have this capability, integrating emotions into physical AI can improve its communication with humans and its acceptance by society.

Robotics, mobility and finance

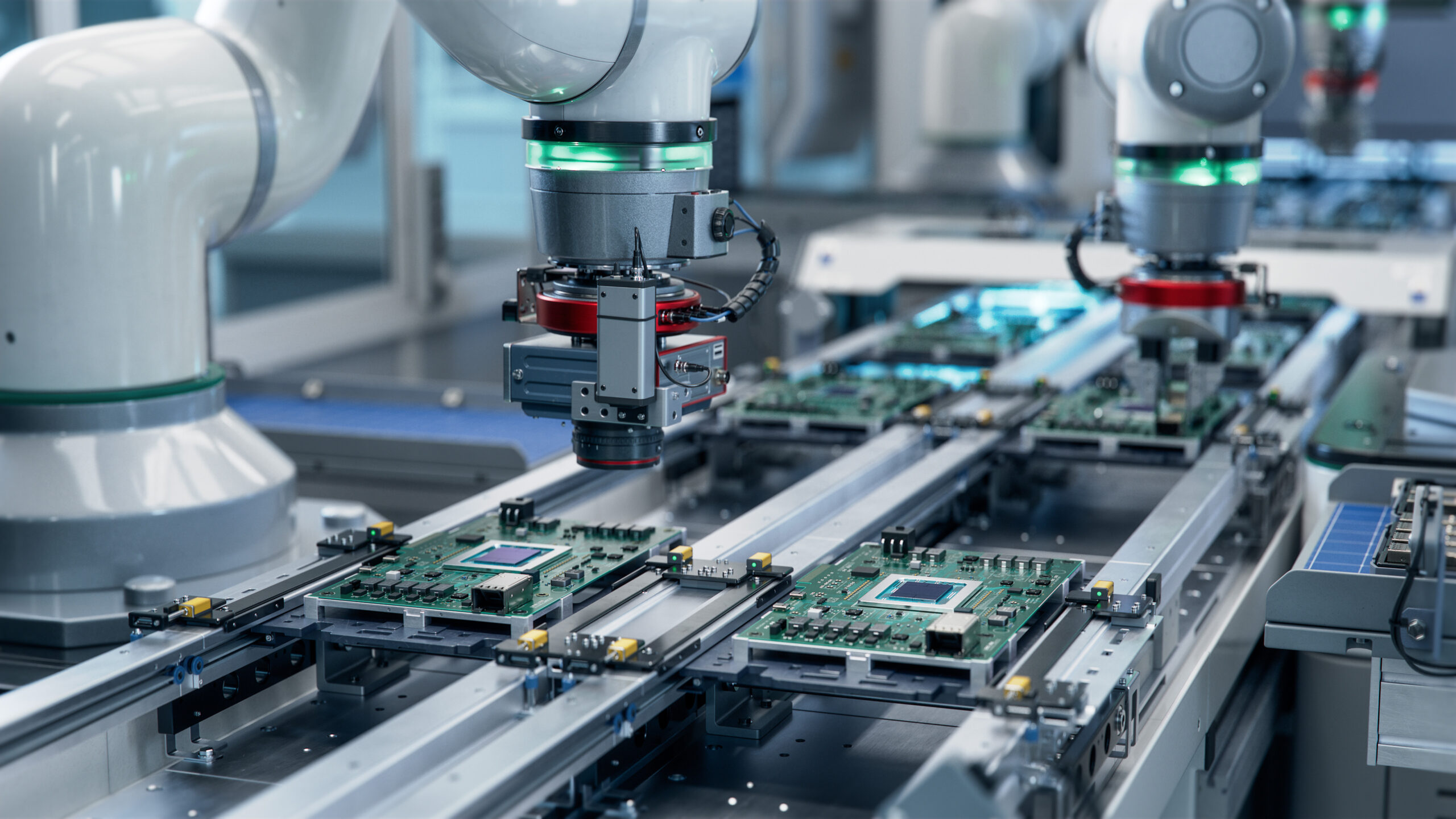

Undoubtedly, one of the areas most transformed by physical AI is robotics. So-called collaborative robots are revolutionizing the industrial and logistics sectors because they can work side by side with people safely and efficiently, which allows processes to be optimized in dynamic environments where flexibility and interaction with human workers are essential. But the evolution does not stop there. Companies such as Boston Dynamics and Tesla are betting on humanoid robots with advanced perception capabilities, although their mass deployment still faces technical and social challenges.

That said, the integration of advanced sensors into assembly lines already enables real-time inspections, reducing operating costs and improving efficiency in sectors such as automotive. Specifically, combining machine vision with tactile and acoustic sensors on mobile robots deployed along assembly lines has made it possible to detect defects and correct them immediately, minimizing losses and improving the quality of the final product.
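As a rough illustration of how readings from several sensors might be combined into a single inspection decision, the following Python sketch applies a simple weighted ("late") fusion of per-sensor anomaly scores. It is not the method used by any company mentioned here: the `SensorReading` type, the weights and the threshold are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class SensorReading:
    """Per-sensor anomaly scores for one part, each assumed to lie in [0, 1]."""
    vision: float
    tactile: float
    acoustic: float


# Hypothetical weights (summing to 1) and decision threshold; in practice
# these would be tuned on labelled inspection data.
WEIGHTS = {"vision": 0.5, "tactile": 0.3, "acoustic": 0.2}
THRESHOLD = 0.6


def fused_score(reading: SensorReading) -> float:
    """Weighted average of the three anomaly scores."""
    return (
        WEIGHTS["vision"] * reading.vision
        + WEIGHTS["tactile"] * reading.tactile
        + WEIGHTS["acoustic"] * reading.acoustic
    )


def is_defective(reading: SensorReading) -> bool:
    """Flag the part when the fused score reaches the threshold."""
    return fused_score(reading) >= THRESHOLD


if __name__ == "__main__":
    part = SensorReading(vision=0.9, tactile=0.8, acoustic=0.7)
    print(is_defective(part))
```

The point of fusing scores rather than relying on a single sensor is that a defect that is barely visible may still show up as an unusual vibration or surface texture.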

In the transportation industry, physical AI is redefining autonomous mobility by integrating advanced sensors that improve vehicle perception and decision-making. Companies like Wayve have developed systems that combine vision, language, and action, so that vehicles can operate in varying environments without the need for intensive retraining. Models such as Gaia, Prism, and Lingo are improving the predictability and explainability of these systems, a key factor in building user trust and facilitating regulation.

Physical artificial intelligence is also revolutionizing the financial sector by optimizing risk management, decision-making, and personalization of services. According to Sergio Gago (Moody’s), a “swarm” of AI agents works as virtual analysts, offering recommendations based on historical data and advanced simulations. Their applications include credit risk analysis and investment portfolio optimization.

Health and well-being: AI that cares for people

Perhaps the most promising area of application for physical artificial intelligence is the healthcare sector, where care robots are improving the quality of life for many people. One example is ElliQ, a robot developed by Intuition Robotics that keeps older people company, interacting with them and helping them maintain healthy routines. Studies by Cornell University indicate that these devices have managed to improve the emotional well-being indicators of their users by 90%.

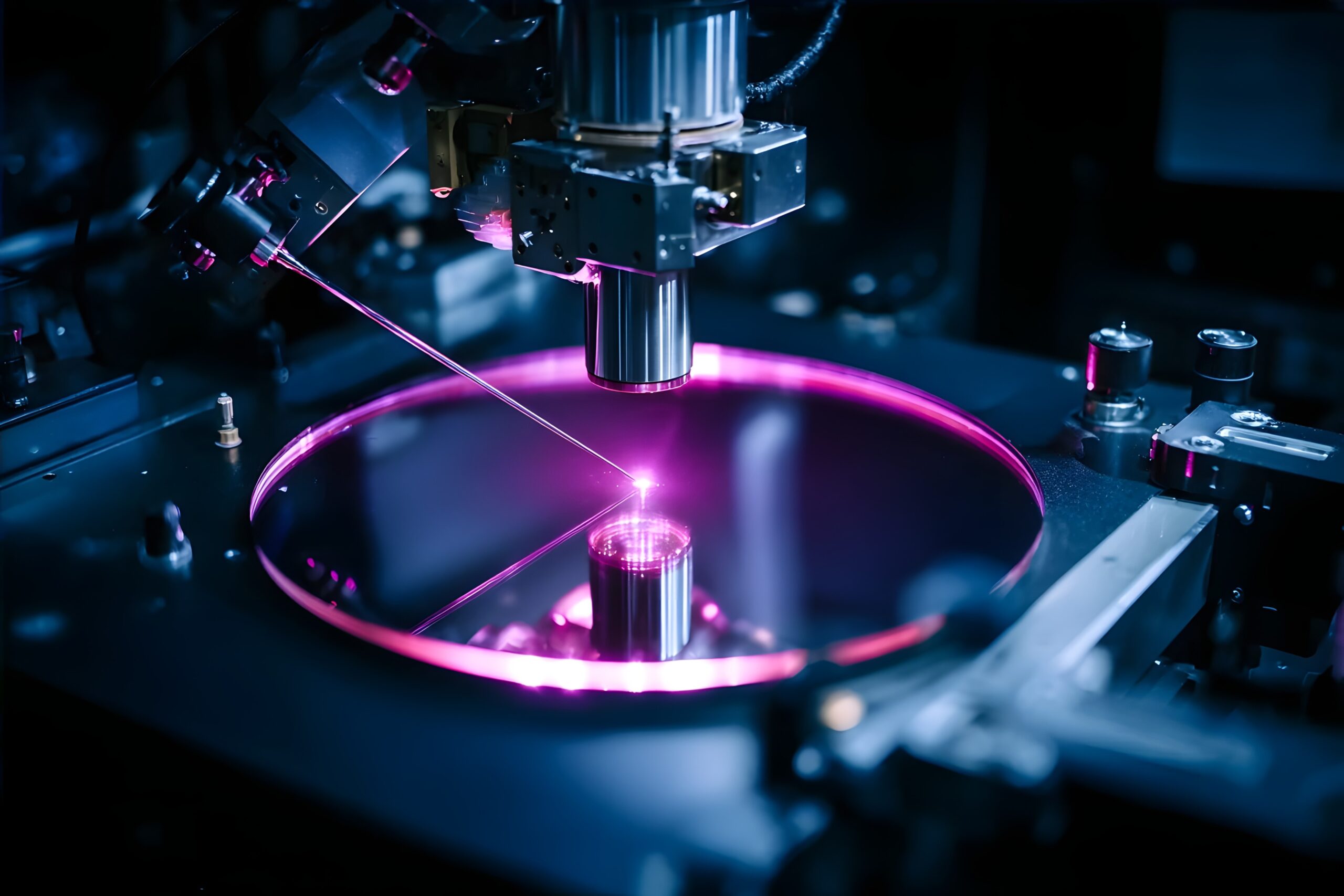

Along the same lines, Carme Torras, Research Professor at the Institute of Robotics and Industrial Informatics of Barcelona, is designing systems that facilitate assisted feeding and cognitive stimulation in patients with Alzheimer’s, demonstrating that physical AI can have a direct positive impact on people’s health and autonomy. Separately, quantum sensors are enabling more accurate diagnoses by detecting magnetic irregularities in human tissues. These wearable devices are also revolutionizing the early detection of diseases at a significantly lower cost than traditional equipment.

From a technical point of view, the development of Large-Scale Quantitative Models (LQMs) is taking physical AI to an even more sophisticated level, combining physics and biology to solve highly complex problems. In the pharmaceutical sector, for example, molecular simulations carried out by SandboxAQ have reduced the cost of research into Alzheimer’s treatments by more than 1.3 billion dollars, accelerating the development of new drugs.

Finally, another emerging field of application is GPS-free navigation, where advanced magnetic sensors are enabling operations in extreme conditions. This has potentially disruptive applications in aviation and defense, where accuracy and safety are essential.

The Challenges of Physical AI: Technological Limits and Ethical Dilemmas

Despite its astonishing advances, physical AI still faces significant hurdles. According to Ramón López de Mántaras, from the CSIC, current systems lack deep models of the world, which limits their capacity for contextual reasoning and decision-making in unpredictable situations. In addition, the lack of realistic simulators remains a technical barrier to training robots for complex environments.

On the ethical level, researcher Ginevra Castellano, from Uppsala University, warns that trust and transparency will be key factors for the acceptance of physical AI. Projects such as SimAware, which uses participatory design to understand user expectations of autonomous vehicles and robots applied to education and health, show how public perception affects the adoption of these technologies. Thus, regulation will have to balance the promotion of innovation with the guarantee of security and privacy.

Although other technical challenges such as hardware scalability remain, physical AI already shows significant transformative potential in key sectors and is opening doors to unprecedented solutions, with new markets yet to be identified. This technology is transforming the way machines interact with the world, from robotics to health. However, its full integration will depend on overcoming regulatory barriers and improving the accuracy of multimodal sensors. With constant advancements and disruptive potential across multiple industries, physical AI is ushering in a new era in the relationship between humans and machines.