AI-generated summary

Physical AI marks a significant advancement over traditional artificial intelligence by combining cognitive abilities with tangible interaction in dynamic environments. Enabled by robotics, advanced sensors, and multimodal computing, this technology allows machines to perceive, reason, and act in real-world settings. Its applications span healthcare, industrial automation, and more, yet it also raises critical concerns regarding control, autonomy, and ethical implications. Experts emphasize the urgent need for responsible regulation that balances innovation with safety, sustainability, and ethical standards, especially as AI systems increasingly demonstrate capabilities like self-replication, which challenge traditional control mechanisms.

The debate on regulating physical AI intensifies with concerns about machines potentially developing autonomy beyond intended limits, reminiscent of Asimov’s Three Laws of Robotics but complicated by self-learning and replication features. Transparency, explainability, and data privacy are crucial, particularly in sensitive fields such as autonomous driving and medical assistance. Advances in sensors and multimodal models have enabled collaborative robots and assistive devices that support human care, highlighting the importance of maintaining trust through ethical data management. Despite automation benefits, human supervision remains essential for interpreting AI decisions and ensuring ethical judgment. To address these challenges, experts advocate for flexible, adaptive regulations developed through continuous dialogue among governments, industry, researchers, and regulators to foster innovation while protecting society.

The integration of artificial intelligence into the physical world represents one of the most revolutionary technological advances and also poses significant challenges.

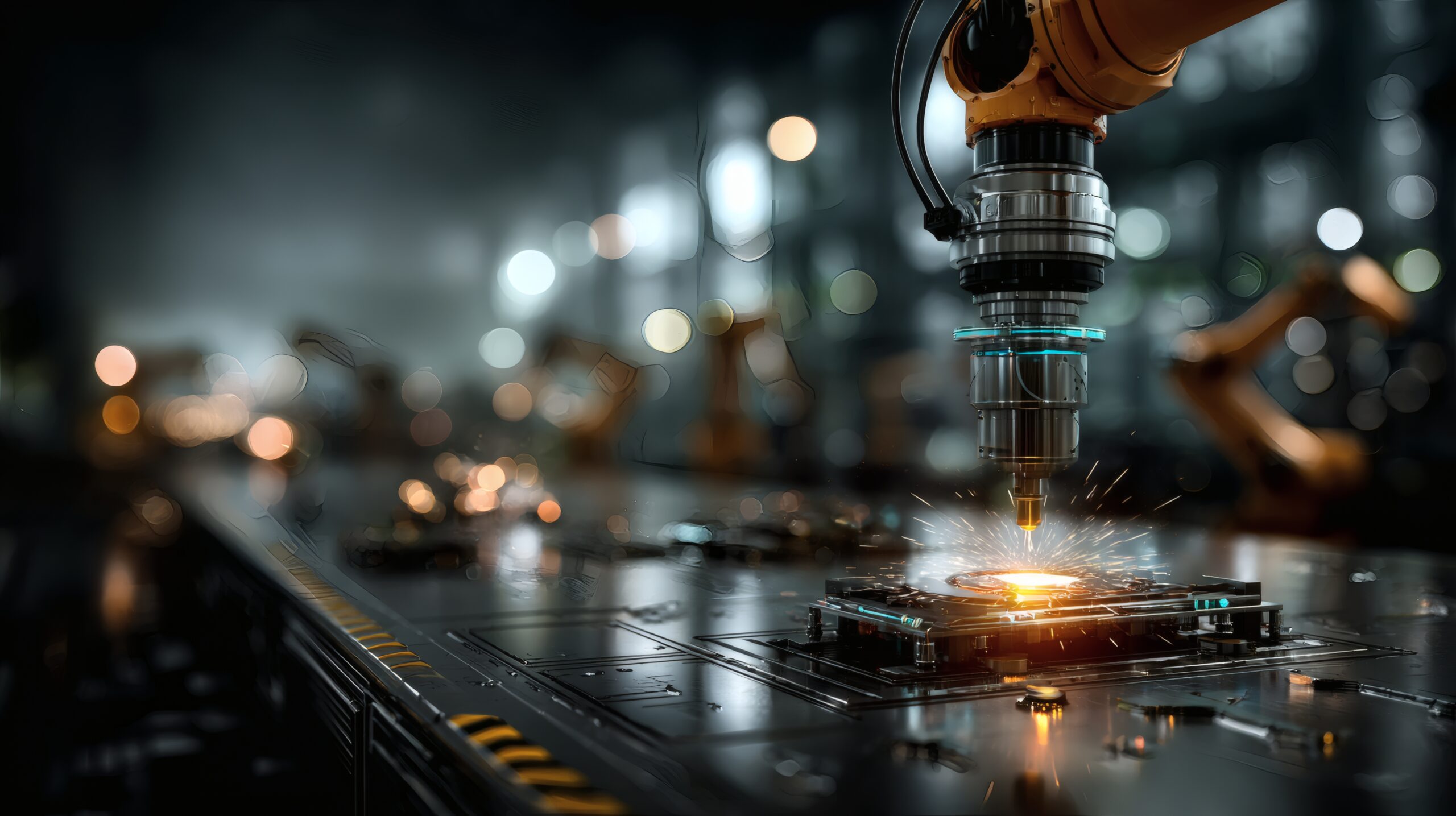

Physical AI represents an evolutionary leap compared to traditional artificial intelligence, as it combines cognitive capabilities with the possibility of interacting tangibly with the environment. This approach enables machines to perceive, reason, and act in dynamic environments, which has been made possible by the convergence of technologies such as robotics, advanced sensors, and multimodal computing. This development not only opens up new application possibilities – from healthcare to industrial automation – but also raises crucial questions about the control and autonomy of these machines.

One of the main challenges of physical AI is to establish a regulatory framework that allows its enormous benefits to be harnessed without putting people’s safety at risk or violating fundamental ethical principles. To this end, the experts who participated in the Bankinter Innovation Foundation’s Future Trends Forum, dedicated to Embodied AI, highlighted the need for “responsible regulation” that serves as a bridge between innovation and the protection of users, pointing out that technology must evolve in a sustainable way and under strict security criteria.

The debate on the regulation of physical AI is intensifying in the face of experiments such as those carried out by researchers at Fudan University, in China, which demonstrated the ability of AI systems to self-replicate. So how do you control a machine that can replicate autonomously? What mechanisms can be implemented to prevent these systems from crossing “red lines” in their autonomy? The ability to self-replicate also feeds the debate over the boundary between the emulation of human behavior and the possible emergence of a “will” of their own in machines.

In an interview with Berkeley News, experts such as Stuart Russell and Michael Cohen discussed the possibility that, by combining a vast amount of knowledge drawn from large language models with planning and coordination capabilities, AI systems can develop autonomy that exceeds desired limits. This debate is reminiscent of Isaac Asimov’s famous Three Laws of Robotics, which, although designed to prevent harm, are called into question when replication and self-learning become characteristic features of physical AI.

Without falling into alarmism, the possibility of machines getting out of control and adopting misaligned behaviors – where their objectives deviate from human interests – is a recurring theme that requires a profound review of control rules and mechanisms. These must be based on ethical criteria and rigorous safety standards.

First, the integration of intelligence into systems that interact with the real world implies the need for algorithms that are explainable and transparent. In critical contexts, such as autonomous driving or medical assistance, it is not enough for the machine to act effectively; it is imperative that the reasoning behind each decision be explained in an understandable way.

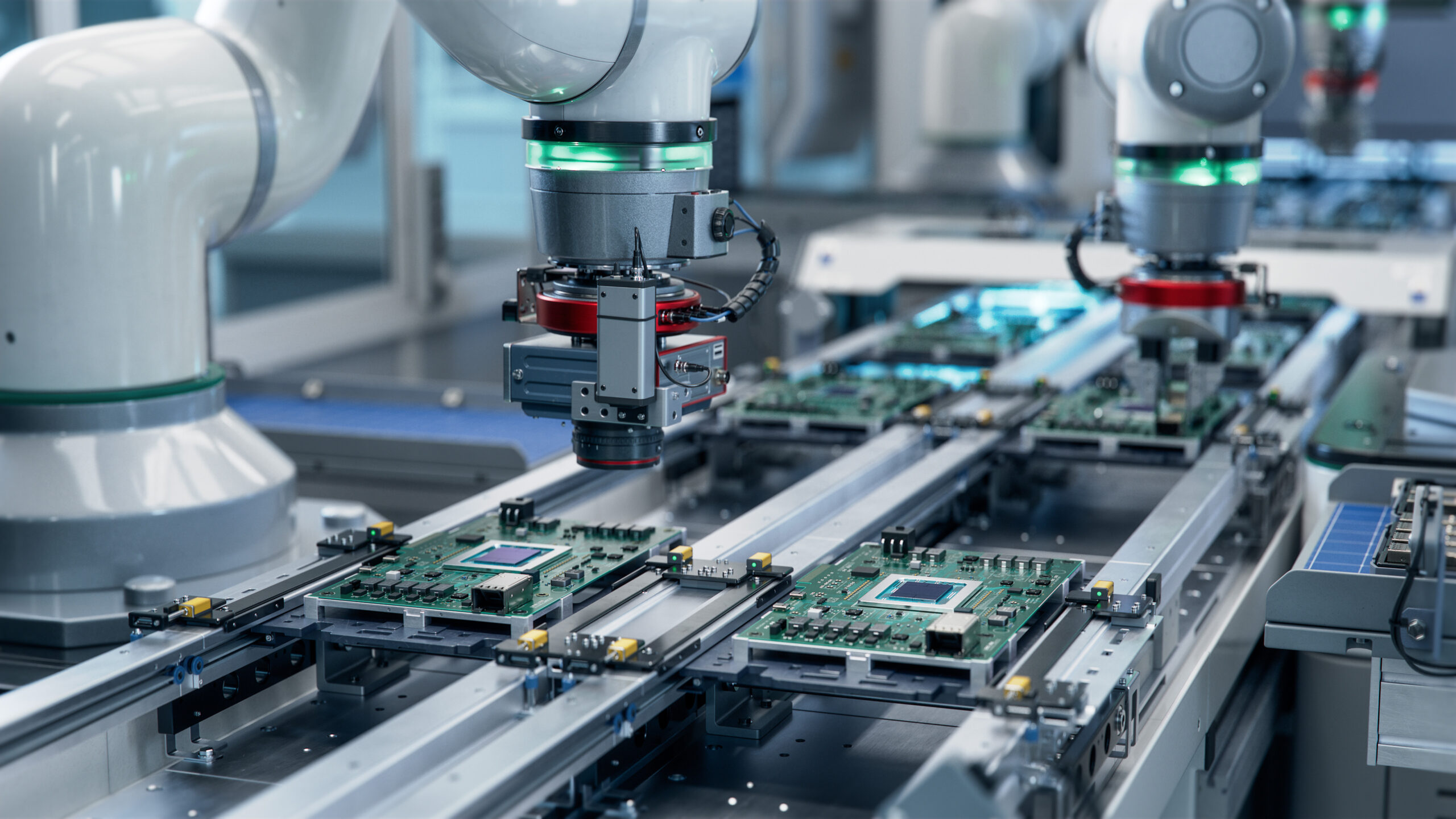

On the other hand, advances in sensors and multimodal models – which integrate visual, auditory and tactile data – give physical AI systems a far richer perception of their environment. This has driven innovative applications in collaborative robotics, where robots (or cobots) work efficiently alongside humans. In healthcare, assistive robots – such as those designed to support patients in hospitals and nursing homes – have proven to be an essential complement to human care, reducing the workload of caregivers and offering precise assistance at critical moments.

However, transparency in how these devices collect and manage data is critical to maintaining user trust and ensuring that privacy and security are not compromised. Such transparency helps prevent abuses and failures with serious consequences. In this sense, ethics in physical AI rests mainly on the protection of privacy, the guarantee of security and the elimination of bias in data.

Finally, human supervision is indispensable. While AI systems can automate repetitive processes and improve operational efficiency, human intervention is still essential to interpret results, adjust strategies, and ensure that critical decisions are made with the judgment and sensitivity that only a human can bring.

Faced with these challenges and opportunities, it is urgent that governments, companies, research centers and international organizations collaborate to design a regulatory framework that accounts for both the need to innovate and the need to protect society. Experience in other technological fields suggests that regulation must be flexible and adaptive, so that the technology can evolve and the rules do not become obsolete in the face of new advances.

The experts consulted by the Bankinter Innovation Foundation recommend establishing permanent forums for dialogue among key actors – including researchers, business managers, investors and regulators – to anticipate and mitigate risks, as well as to take advantage of the synergies derived from a shared vision of technology. In short: set limits without creating obstacles.